- ChatGPT makes structural errors in reasoning, data, and common sense, despite its "intelligent" appearance.

- The errors stem from the statistical training method, data biases, and a lack of real understanding.

- Many professions and educational tasks will change, but AI will primarily act as an assistant, not as a complete replacement.

- Using ChatGPT with source verification, good prompts, and human review allows you to take advantage of it without taking excessive risks.

Generative artificial intelligence has crept into our daily lives, and ChatGPT has become the star tool for writing, summarizing, scheduling, or finding ideas. However, as impressive as it may seem, it is far from perfect. Blindly trusting its answers can lead to more than one unpleasant surprise if you don't know where it falls short.

That is why more and more experts insist that understanding the tasks where ChatGPT fails is almost as important as knowing how to use it. It's not about demonizing technology, but about learning to live with its limitations: when it's reliable, when to take its answers with a grain of salt, and how to use it without losing human critical judgment.

Why it's crucial to know the limitations of ChatGPT

Behind the chat interface lies a complex system that combines large language models with internal mechanisms that prioritize speed or reasoning depending on the question. This automatic “decision” doesn't always align with what the user needs: sometimes a quick response takes precedence even if the problem requires step-by-step thinking. The parameters of the model also influence that behavior.

Furthermore, the model's behavior changes with each update, which means ChatGPT may respond differently to the same query at different times. Added to this are context limitations in long conversations, where it starts to "forget" parts of the thread, and security filters that, out of excessive caution, block sensitive topics and even perfectly legitimate ones.

This cocktail of factors means that, in practice, the user experience can swing from brilliant to frustrating in a matter of seconds. The difference between a useful result and a disastrous one usually lies in whether the user can detect when the AI is going off track and knows how to redirect it.

Several recent studies and surveys show that a significant portion of users do not fully trust ChatGPT's responses. In an OCU survey, many people cited "lack of trust" in the information generated as the main reason for not using it daily, despite having tried it.

Consequently, specialists in artificial intelligence and education recommend using ChatGPT as an additional source and never as the ultimate arbiter of truth. Validating data against other sources, having a minimum knowledge of the subject, and assuming that information can be fabricated (the famous "hallucinations") are nowadays mandatory.

Common errors and technical limitations of ChatGPT

Technical literature has documented that these systems tend to produce plausible but false answers, especially when the question is ambiguous, very specific, or deviates from the data they saw during training. The problem is that the wording is so convincing that it's difficult to detect the error if the user isn't familiar with the topic. Tools such as extensions that detect AI-generated content can help identify some of those hallucinations.

Experts like Professor Josep Curto emphasize that some of the most common failures are profound and affect the overall reliability of the system. Among them, he mentions erroneous descriptions of verifiable facts, incomplete answers, invented explanations of the model's "reasoning process," and less accurate behavior in languages other than English.

Furthermore, although improvements are being introduced, ethical and safety restrictions are not always well calibrated. Sometimes they block legitimate requests while allowing other problematic ones, or they produce responses laden with biases inherited from training data, whether cultural, political, or gender-related.

Nevertheless, thanks to ongoing research in deep learning and natural language processing, the latest versions, such as GPT-4, achieve near-human performance in complex tasks in mathematics, programming, law, medicine, or psychology. But high performance doesn't mean infallibility, and blind spots still exist.

7 tasks where ChatGPT fails (or is unreliable)

Although ChatGPT can be very useful in many situations, there are certain types of tasks where the probability of obtaining an incorrect, biased, or misleading answer increases considerably. It is important to be familiar with these scenarios in order to exercise extra caution.

1. Logical reasoning and complex mathematics

When the problem demands chained calculations, logical demonstrations, or formal mathematical proofs, ChatGPT often makes mistakes. It can err in simple operations, skip key steps, or present incorrect results as correct.

This is especially noticeable in exercises with many intermediate steps, combinatorics, probability, advanced algebra, or geometry. Although the text may seem well-argued, small slips in one step drag down the entire result, and the model has no way to truly "check" its own reasoning.
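Because the model cannot check itself, one practical safeguard is to recompute any number it gives with a deterministic tool. The following is a minimal sketch, assuming a simple dice-probability question; the function names and the "claimed" values are hypothetical stand-ins for a model's answer, not real ChatGPT output.

```python
from fractions import Fraction
from itertools import product


def probability_of_sum(target: int, dice: int = 2, sides: int = 6) -> Fraction:
    """Exact probability that `dice` fair dice add up to `target`."""
    # Enumerate every possible roll instead of trusting a formula.
    outcomes = list(product(range(1, sides + 1), repeat=dice))
    favourable = sum(1 for roll in outcomes if sum(roll) == target)
    return Fraction(favourable, len(outcomes))


def check_claim(claimed: Fraction, target: int) -> bool:
    """Return True only if the claimed value matches the enumerated one."""
    return claimed == probability_of_sum(target)


if __name__ == "__main__":
    # Hypothetical model answer: "the probability of rolling a 7 is 1/6"
    print(check_claim(Fraction(1, 6), target=7))
    # Hypothetical model answer: "the probability of rolling an 8 is 1/6"
    print(check_claim(Fraction(1, 6), target=8))
```

Exact fractions avoid rounding noise, so a mismatch points to a genuine error in the claimed result rather than a floating-point artifact.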

2. Common sense, intuition, and human context

The AI interprets what it is told literally, so it finds it difficult to grasp irony, double meanings, sarcasm, or highly localized cultural references. This leads to responses that, to a human, are clearly "out of place".

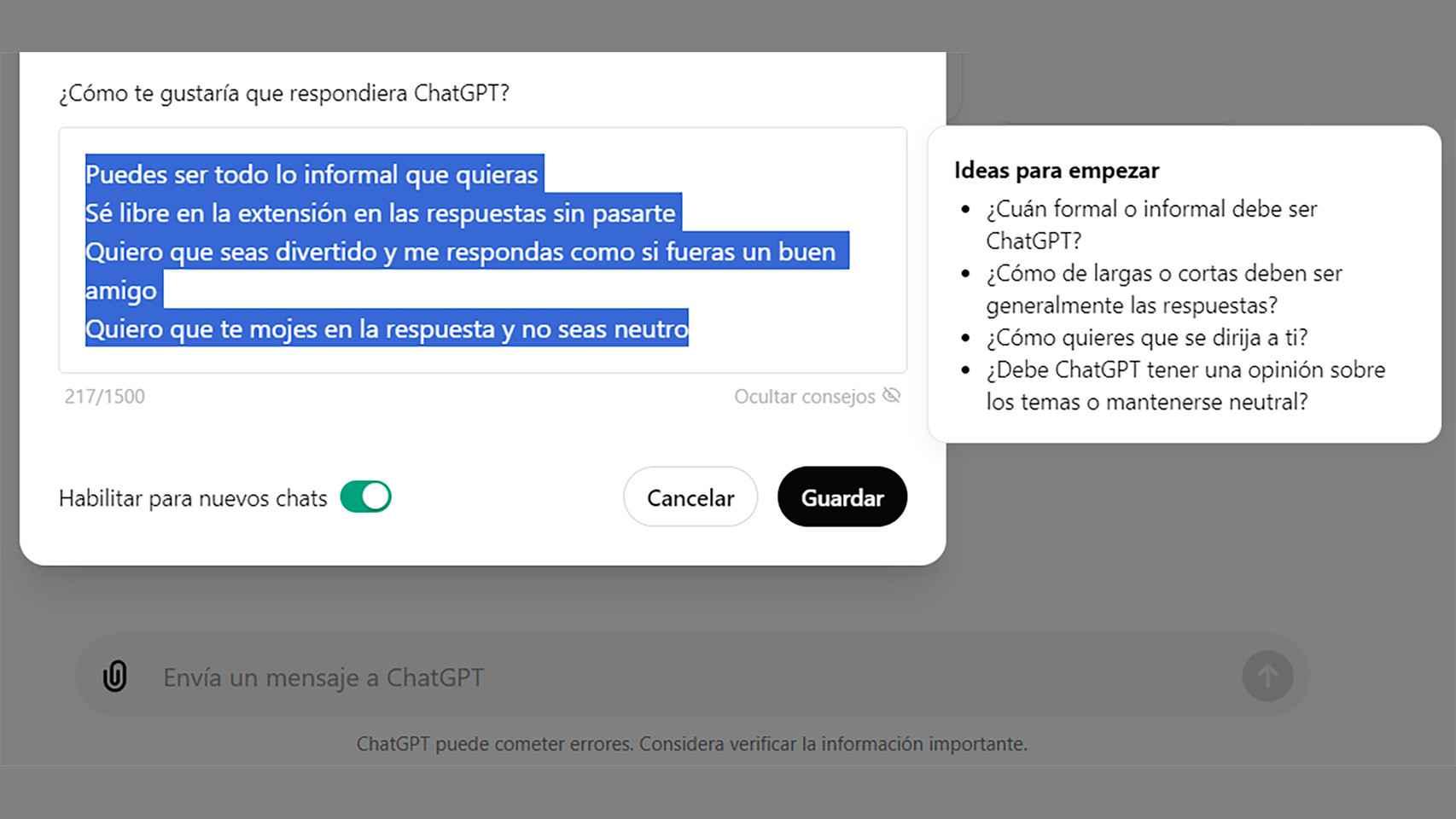

When the task demands genuine empathy, deep emotional understanding, or practical knowledge of the real world, the model merely imitates language patterns, without any personal experience behind them. It may sound empathetic, but it feels nothing and does not perceive situations as a person would. To improve the tone and adapt responses, it is advisable to customize ChatGPT and adjust styles and roles.

3. Long-term memory and project continuity

Even though it seems to “remember” the conversation, ChatGPT has limited context and may lose parts of the history without notice. Very long conversations, or projects that drag on over time, end up suffering from memory lapses.

This directly affects tasks such as writing books, extensive reports, or multi-session programming projects. If content is not stored and managed externally, there is a risk of losing consistency, repeating decisions already made, or contradicting earlier statements without the model being aware of it.
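The effect of a limited context can be illustrated with a toy sliding window. This is only a sketch under stated assumptions: `count_tokens` is a crude word count standing in for a real tokenizer, `trim_history` is a hypothetical helper, and ChatGPT's actual context management is not documented in this article and may work differently.

```python
from collections import deque


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def trim_history(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose combined size fits in `budget`."""
    kept = deque()
    used = 0
    # Walk from newest to oldest so recent turns are kept first.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > budget:
            break  # everything older than this point is silently dropped
        kept.appendleft(message)
        used += cost
    return list(kept)


if __name__ == "__main__":
    history = [
        "The hero is called Ana",      # 5 words
        "She lives in Vigo",           # 4 words
        "Write chapter two please",    # 4 words
    ]
    # With a budget of 8 "tokens", the oldest message no longer fits.
    print(trim_history(history, budget=8))
```

In this toy run the message that names the hero falls out of the window, which is the kind of silent loss that makes external notes or summaries necessary in long projects.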

4. Supposed time savings that end up costing you more

Many people turn to ChatGPT to work faster, but when answers contain subtle errors or are missing key information, reviewing and correcting them can take longer than starting from scratch. This is very noticeable in professional tasks that require a high standard of quality.

In areas such as technical reports, legal documentation, academic content, or production code, the extra time spent on verification can completely dilute the supposed benefit of using AI if there is no rigorous review process.

5. Outdated data and lack of real-time connection

Models like GPT-3 or GPT-4 are trained on a data set that ends at a cutoff date, so they do not have direct access to the web to consult real-time information. Depending on the version, their knowledge may stop at a specific year.

This implies that, in matters of current events, recent legislative changes, scientific developments, or breaking news, the answers may be outdated or simply false. If the user doesn't check with updated sources, the error goes unnoticed.

6. Variable performance and saturation

During periods of high demand, especially for free accounts, the quality and speed of responses may be affected. The model may answer with less detail, cut off responses prematurely, or provide more superficial solutions.

This variability means that it is not always a reliable resource for critical tasks with tight deadlines. Relying entirely on the service during peak hours without a backup plan can become an operational risk for businesses and professionals.

7. Restrictions, filters and biases

For security reasons, ChatGPT includes filters to prevent harmful, illegal, or extremely sensitive content. The problem is that these moderation systems are not perfect and sometimes block legitimate requests or respond vaguely out of excessive caution.

Even so, several researchers have shown that the models can continue to generate responses with racist, sexist, or ideological biases, reflecting the biases present in the training data. Cases have been documented of bots trained with similar techniques that end up producing discriminatory content. Ethical evaluation of chatbots helps to understand and mitigate these biases.

Fundamental errors: why ChatGPT makes so many mistakes

In analyzing these failures, philosophers of mind and AI experts point to structural limitations: ChatGPT doesn't think, understand, or have any awareness of what it says. Its apparent intelligence emerges from large-scale statistical correlations, not from genuine reasoning.

Professor Ned Block, for example, has pointed to experiments with generative image models in which they are asked to draw a clock showing times like 12:03 or 6:28, and almost always a clock showing 10:10 appears. That time dominates in online advertising photos because it is aesthetically pleasing and does not obscure the logo.

This behavior illustrates how models tend to replicate the most frequent patterns in their training data, even if they contradict the specific instruction. They do not "understand" the notion of time: they only repeat the statistically most common setting.

Something similar happens when an image is requested of a person writing with their left hand. Many models end up systematically generating right-handed characters because most examples in the data are also right-handed. Achieving a well-represented left-handed character requires persistent use of very specific prompts, and even then, it doesn't always work.

This type of structural failure means that even developers cannot completely correct some deeply ingrained biases. Reinforcement training with human feedback helps, but manually reinforcing all possible cases (all clock hours, all writing postures, etc.) is unfeasible.

On a textual level, the problem translates into overly confident explanations of things that the model doesn't actually "know". It can detail step by step a supposed method that it has never executed, or invent academic references and links with a totally convincing air.

How ChatGPT was trained and why that influences its mistakes

Models such as GPT-3 and GPT-4 are based on the Transformer architecture, and they have been trained on massive amounts of text from the Internet: web pages, books, scientific articles, news, and other public resources such as Wikipedia or Common Crawl.

The process has two main phases. First comes a massive pre-training in which the model learns to predict the next word in millions of sentences. At this stage, explicit “grammar” is not taught; instead, patterns are inferred by filling in gaps in the text, a kind of giant word-completion exercise.
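Next-word prediction can be illustrated at a very small scale with a bigram counter. This is a deliberately simplified sketch, not the Transformer training used for GPT models: the corpus is invented and the function names are hypothetical. It does show why the most frequent continuation in the data tends to win.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: list[str]) -> dict:
    """Count, for every word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def predict_next(model: dict, word: str):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


if __name__ == "__main__":
    # Invented toy corpus: "ten" follows "shows" more often than "six".
    corpus = [
        "the clock shows ten",
        "the clock shows ten",
        "the clock shows six",
    ]
    model = train_bigrams(corpus)
    print(predict_next(model, "shows"))   # the majority pattern wins
    print(predict_next(model, "banana"))  # never seen in training
```

The toy model has no concept of time or clocks; it only repeats whichever word followed "shows" most often, which mirrors, in miniature, the frequency-driven behavior described in this article.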

Then fine-tuning is done for specific tasks, such as translation, summarization, question answering, or conversational dialogue. This is where smaller, more specific data sets come into play, labeled to teach the model what type of response is considered appropriate in each context.

In the case of ChatGPT, reinforcement learning with human feedback (RLHF) has also been used. Human trainers evaluated several possible responses from the model to the same input and ranked them from best to worst. With this information, the system learned to prefer the highest-rated outputs.
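A ranking of this kind is commonly turned into pairwise comparisons that a reward model can learn from. The sketch below shows that idea with a standard pairwise logistic loss; the function names and example responses are hypothetical, and whether ChatGPT's pipeline matches this formulation in every detail is an assumption.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked_responses: list[str]) -> list[tuple[str, str]]:
    """Expand a best-to-worst ranking into (preferred, rejected) pairs."""
    # combinations() keeps input order, so the first item of each pair
    # always ranks above the second.
    return list(combinations(ranked_responses, 2))


def preference_loss(score_preferred: float, score_rejected: float) -> float:
    """Pairwise logistic loss: small when the preferred response scores higher."""
    diff = score_preferred - score_rejected
    return math.log1p(math.exp(-diff))


if __name__ == "__main__":
    # Hypothetical ranking produced by a human labeler, best first.
    ranking = ["clear answer", "vague answer", "wrong answer"]
    for preferred, rejected in ranking_to_pairs(ranking):
        print(f"{preferred!r} > {rejected!r}")

    # A reward model that agrees with the labeler is penalized less.
    print(preference_loss(2.0, 0.0) < preference_loss(0.0, 2.0))
```

Note that the loss only rewards agreement with human preferences. Nothing in it checks whether the preferred response is factually true, which is consistent with the limitation described in this section.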

This approach improves the perceived quality of the responses, but does not eliminate the underlying problem: the model still lacks semantic understanding and direct access to reality. It only improves its chances of sounding more helpful, polite, and confident, which sometimes makes the hallucinations even more dangerous.

Furthermore, although data cleaning, regularization, and human evaluation techniques are applied to reduce biases and stereotypes present in training texts, it's impossible to filter them completely. That's why companies recommend continuously monitoring output, especially in sensitive applications.

Impact of ChatGPT on studies and the world of work

Since its launch, ChatGPT has reignited the debate about how generative AI will affect human jobs and the education system. Large technology companies present it as a "co-pilot" or assistant, but many studies point to profound transformations in numerous professions.

Research from universities such as New York University and the University of Pennsylvania, along with analyses from OpenAI and other entities, shows that language-based tasks (writing, translation, text analysis, documentation, basic accounting) are especially vulnerable to partial automation.

In the labor market, the occupations identified as especially exposed include telemarketers, university professors of languages and literature, history or law teachers, translators, administrative staff, and certain writing profiles. This doesn't mean they will all disappear, but it does mean that a large part of their functions will be able to rely heavily on AI.

Other studies find clear positive effects. In tests with software developers, for example, those who used coding assistants based on similar models completed tasks up to 55% faster than those who worked without help. Productivity in routine tasks increases, allowing more time to be dedicated to complex problems.

In education, the debate is especially heated. Some experiences show that it is becoming increasingly difficult to distinguish whether a short essay has been written by a student or by ChatGPT, even for experienced teachers and professional authors. In some experiments, teachers and experts failed to identify the true author.

In response to this, many educators are beginning to propose more oral assessment, classwork, and activities that demonstrate the student's thought process, not just the final product. Others propose using ChatGPT as a teaching tool: a starting point that the student must critique, correct, compare with real sources, and improve.

Best practices for using ChatGPT without falling into its traps

Despite all these problems, ChatGPT is extremely useful if given the right role. The key is to combine good prompt design with rigorous human control of what it generates, especially when there are academic, legal, or professional implications.

A first basic recommendation is to always verify facts, figures, references, and proper names before using them. It is not enough for the text to "sound good": you have to check it against reliable sources (scientific articles, official legislation, reference books, specialized databases, etc.).

It is also advisable not to base critical decisions on a single model response. When the matter is important, it is prudent to compare several opinions (other tools, human experts, documentation) and use the chat only as support or a draft generator.
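One simple way to apply this in practice is to collect several answers to the same question and see how much they agree. The following sketch uses a hypothetical `agreement` helper and an arbitrary 60% threshold; the answers are passed in as plain strings, so no particular API is assumed.

```python
from collections import Counter


def agreement(answers: list[str], threshold: float = 0.6):
    """Return (majority_answer_or_None, share_of_votes) for a list of answers."""
    # Normalize lightly so "Paris" and " paris " count as the same answer.
    normalized = [a.strip().lower() for a in answers if a.strip()]
    if not normalized:
        return None, 0.0
    answer, votes = Counter(normalized).most_common(1)[0]
    share = votes / len(normalized)
    # Below the threshold, report no consensus instead of guessing.
    return (answer if share >= threshold else None), share


if __name__ == "__main__":
    # Hypothetical answers gathered from several sources or repeated queries.
    print(agreement(["Paris", "paris ", "Lyon"]))
    print(agreement(["1998", "2001", "2004"]))
```

Agreement is only a warning signal: several sources can share the same mistake, so a consensus answer still needs checking against a reliable reference.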

The design of the instructions has a huge impact: clear, specific, and well-structured prompts reduce ambiguity and tend to produce more useful outputs. Asking the model to explain its reasoning step by step, to include counterexamples, or to point out possible limitations can help detect errors.
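These recommendations can be captured in a small reusable template. The sketch below is one possible layout, assuming a hypothetical `build_prompt` helper; the field names and wording are illustrative choices, not a format required by ChatGPT.

```python
def build_prompt(task: str, context: str, constraints: list[str]) -> str:
    """Assemble a structured prompt that asks for reasoning and limitations."""
    lines = [
        f"Task: {task}",
        f"Context: {context}",
        "Constraints:",
    ]
    lines += [f"- {constraint}" for constraint in constraints]
    # Requests that make errors easier to spot during human review.
    lines += [
        "Explain your reasoning step by step.",
        "Give one counterexample or case where your answer would not hold.",
        "List the limitations or uncertainties of your answer.",
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_prompt(
        task="Summarize the attached report in 150 words",
        context="The audience is a non-technical management team",
        constraints=["Use plain language", "Do not add facts that are not in the report"],
    ))
```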

Finally, it helps to use ChatGPT as a generator of templates, outlines, drafts, and lists of ideas that the person then works on, instead of simply accepting the final text. This saves time on the more mechanical aspects, but the final content is still reworked and validated by a human.

The experience of recent years shows that generative artificial intelligence works best when we treat it as a powerful but fallible assistant. With a critical spirit, good judgment in choosing tasks, and a serious review system, it becomes a valuable ally instead of a silent risk.
