- AI makes it possible to detect and respond to cyber threats and physical crimes with greater speed, accuracy and context.

- Attackers also rely on AI for fraud, deepfakes, and automating the exploitation of vulnerabilities.

- Protecting AI requires securing data, models, and APIs, with full visibility across hybrid and multicloud environments.

- Integrating security by design and focusing on resilience turns AI into a true competitive advantage.

La artificial intelligence applied to security It has become one of the biggest topics of conversation in businesses, public administrations, and law enforcement agencies. The shift to the cloud, hybrid environments, and the massive growth of data have completely changed the playing field, and attackers are taking advantage of this at breakneck speed.

At the same time, AI opens up a huge window of opportunity: from detect cyberattacks in real time This includes anticipating physical crimes in specific areas and automating tedious tasks in security operations centers. However, all this potential comes with very serious risks if the AI itself, its data, and the interfaces surrounding it are not properly protected.

The new threat landscape and why AI is key

The current cyber threat environment is much more complex and aggressive which was just a few years ago. The massive migration to the cloud and hybrid architectures has caused attack surfaces to skyrocket: now data is spread across on-premises data centers, different cloud providers, and edge environments, which greatly complicates control.

This change coincides with a clear cybersecurity professionals shortageIn the United States alone, there are hundreds of thousands of unfilled positions, resulting in overloaded teams with little time for in-depth research and forced to prioritize hastily.

The result is that the attacks are happening today. more frequent and more expensiveRecent reports place the average global cost of a data breach exceeding $4 million, with cumulative double-digit increases in just three years. When analyzing the impact of AI on these incidents, the difference is striking: organizations that do not use AI in their security strategy pay, on average, significantly more per breach than those that do.

Companies that have AI-based security capabilities They manage to cut the average costs of a data breach by hundreds of thousands of dollars. Even having partial or limited AI controls represents a significant saving compared to those who haven't invested anything in this area.

In this context, AI is not just “a bonus”: it is becoming a essential strategic piece to be able to monitor large volumes of security information, detect anomalous behavior, and respond to incidents before they escalate.

How cybercriminals use AI

The other side of the coin is that the same advances in AI that help with defense have also been quickly adopted by attackersThe ability to generate convincing fake content at low cost is changing fraud, disinformation, and even personal extortion.

On the one hand, advanced text generators allow you to create fake news, phishing emails And highly polished social engineering messages, tailored to the victim's context and written in a style that mimics journalists or business executives. We're no longer talking about emails riddled with errors, but rather highly credible communications.

On the other hand, the tools for creating video and audio deepfakes They've taken a giant leap forward. With specialized software, attackers can superimpose faces onto real videos (deepfaces) or clone voices (deepvoices) with a level of realism that easily fools anyone who isn't prepared.

An illustrative case is telephone fraud based on the voice cloning of a family memberThe criminals, after obtaining audio recordings of a person, train a model capable of imitating their tone, accent, and manner of speaking. They then call a relative, impersonating that family member, fabricate an emergency, and request an urgent money transfer. Upon recognizing the voice, the victim completely lowers their guard.

Beyond outright deception, AI is also used to automate vulnerability discoveryThis includes perfecting brute-force attacks against credentials or writing malicious code. Law enforcement agencies and organizations like the FBI have already detected a clear increase in intrusions related to the malicious use of generative AI, and many cybersecurity professionals acknowledge that a significant portion of the growth in attacks is due precisely to these new tools.

AI applications in cybersecurity: from endpoint to cloud

Faced with this increased risk, AI is also transforming the cyber defense across the entire technology stackCompanies are integrating machine learning capabilities into endpoint solutions, firewalls, SIEM platforms, and cloud-specific tools.

At the user end, the solutions of AI-powered endpoint security They continuously analyze the behavior of processes, files, and connections. Instead of relying solely on signatures, they learn what is "normal" on each device and detect suspicious deviations, such as the sudden execution of unknown scripts or the mass encryption of files typical of ransomware.

Next-generation AI-based firewalls (NGFWs with intelligent capabilities) are capable of inspect encrypted traffic, detect anomalous patterns and correlate events across multiple ports and protocols. This allows for the disruption of communications with command and control servers or the blocking of data exfiltration attempts that would otherwise go undetected.

At the global monitoring layer, the platforms of Security Information and Event Management (SIEM) And XDR solutions generate thousands of alerts daily. AI is used to prioritize, group related events, and turn that avalanche of raw data into a few high-impact incidents that truly deserve immediate attention.

Furthermore, they are deployed in cloud environments AI-based targeted security solutions These technologies identify misconfigurations, excessive permissions, or unusual data movement between regions and services. In addition, AI-powered Network Detection and Response (NDR) technologies monitor internal network traffic for behaviors typical of an attacker already inside the system.

Benefits of AI for security teams

Cybersecurity teams face a dual challenge: managing an immense volume of data and a increasing technical complexityHere, AI has become a key ally in doing more with the same resources.

One of the clearest benefits is the much faster threat detectionWhere previously an analyst had to manually review events, algorithms now learn attack patterns, user habits, and typical system behaviors. This allows them to identify critical incidents in a matter of seconds, even when they manifest as a combination of subtle signals scattered across different data sources.

Another key point is the reduction of false positives and false negativesUsing pattern recognition, anomaly detection, and continuous learning techniques, AI filters out the "noise" of irrelevant alerts and focuses on those that truly pose a threat. This prevents teams from burning out by responding to alerts that ultimately lead nowhere.

Generative AI is also changing the way analysts work with information. By being able to translate technical data into natural languageThe tools can produce clear reports that are easily shared with managers or other departments, explain what a specific vulnerability entails, or detail the recommended steps to correct it.

This ability to present information in an understandable way and guide the response makes it Junior analysts can take on more complex tasks without needing to master query languages or advanced tools from day one. In practice, AI generates remediation steps, concrete suggestions, and additional context that accelerates the learning curve.

Finally, AI provides a more complete view of the environment to aggregate and correlate data of security records, network trafficCloud telemetry and external threat intelligence sources help reveal attack patterns that would otherwise go unnoticed from a single system.

Authentication, passwords, and behavioral analysis

Beyond intrusion detection, AI is changing the way we Identities are protected and access is managedTraditional passwords still exist, but they are increasingly being combined with behavioral analysis models and additional factors powered by AI.

AI is used in systems of adaptive authentication They assess the context of each login: location, device, time, usage history, typing speed, and other factors. If anything seems out of the ordinary, the system increases the security level by requesting additional information or blocking the session.

In parallel, behavioral analysis solutions allow detect phishing attempts or compromised accounts by studying how users interact with applications, what resources they access, and how they navigate the network. A significant change in these patterns may indicate that someone is using stolen credentials.

Vulnerability management also relies on AI to go beyond the typical endless lists of flaws. The models analyze which vulnerabilities are most likely to be exploited based on the actual activity of the attackers, the availability of public exploits and the exposure of each asset, helping to prioritize patching efforts.

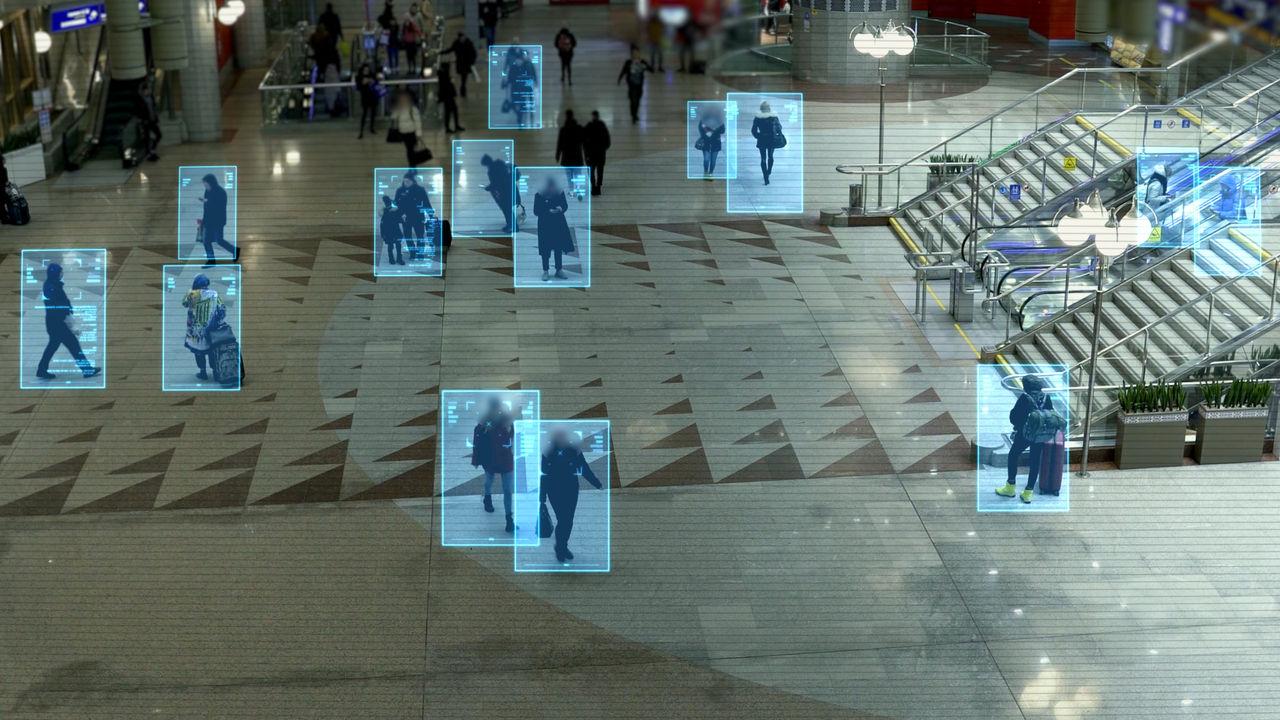

In physical environments, the surveillance with cameras and sensors It is powered by AI models capable of detect suspicious behaviorIdentifying license plates, recognizing movement patterns, or alerting to unusual gatherings. By combining this information with historical data and context, early warning systems can be activated in areas of high crime activity.

Crime prevention and prediction in the physical world

Outside of cyberspace, AI is also beginning to play an important role in the crime prevention in urban environmentsBy analyzing large volumes of historical data, authorities can identify patterns that help them better plan resources.

Among the most common applications is the analysis of crime patternsThis information helps determine what types of crimes are concentrated in specific areas, at what times they are most frequent, and how they evolve over time. It is used to adjust patrols, improve lighting, install additional cameras, and design targeted prevention campaigns.

AI is also used in early warning systems These systems combine real-time data (cameras, sensors, social media, even weather variables) to estimate when certain incidents are most likely to occur. While not infallible, they can help anticipate risk scenarios.

In the field of research, algorithms allow perform digital forensic analysis They use large volumes of forensic data (fingerprints, DNA, case records, arrest histories) to identify connections that would be very difficult to see at first glance. This allows them to link seemingly unrelated cases or refine the search for suspects.

All this deployment must be constantly balanced with the respect for privacy and human rightsThe risk of bias in training data is real: if models are fed with already biased police records, they can reinforce existing discrimination by "predicting" more crime in specific communities, even though the underlying problem is something else.

Risks and challenges: data security, model security, and API security

For AI to be trustworthy, security can no longer be limited to protecting servers or networks. It is essential. protect one's own intelligence: the data that feeds the models, the AI architectures, and the interfaces that make them accessible.

Models are only as good as their training data. If that data is... manipulated or biasedAI will make erroneous decisions. A very clear example can be seen in models used for personnel selection processes: if they are trained with histories where certain profiles have been systematically favored, AI can reinforce biases based on gender, race, or origin, discriminating against perfectly qualified candidates.

On a purely technical level, language models and other advanced AIs face new categories of attacks, such as prompt injectionIt consists of hiding malicious instructions in the data input to alter the model's behavior, circumvent constraints, or cause it to return harmful information.

Another major risk is the exposure of sensitive informationIf systems are misconfigured, they can reveal confidential customer data, trade secrets, or fragments of the training set itself, either directly or through techniques such as membership inference or model extraction.

The APIs used to access, train, or exploit AI models represent a critical front. Without one robust authentication, request limiting, and input validationThey become easy targets for brute-force attacks, mass scraping, or unauthorized changes to model parameters. It's no coincidence that a majority of companies have suffered API-related security incidents in recent months.

Complexity of hybrid environments and the need for total visibility

Most organizations run their AI solutions in hybrid infrastructures that combine public cloud, private cloud, on-premises, and increasingly, edge computing. This dispersion makes it difficult to maintain a clear view of where the data is, how it moves, and who has access to it at any given time.

Lack of visibility generates fragmented controls and blind spotsSome models are trained in one cloud, refined in another, and then deployed in different countries, with data moving from one environment to another. Without adequate observability, security breaches or regulatory non-compliance can easily arise without anyone detecting them in time.

Furthermore, unlike traditional software, AI models They evolve with useThey can adapt their parameters according to the new data they process, which makes it difficult to detect if they have been manipulated or if they have gradually deviated from their expected behavior.

Therefore, it is crucial to deploy continuous monitoring and advanced analytics, including security in your homelabRegarding the performance, responses, and decisions of the models, only in this way can strange patterns, subtle degradations, or attempted attacks that go unnoticed in traditional logs be identified.

This need for control also extends to the network and application layers. Web application and API protection technologies, combined with deep traffic inspection capabilities, enable the detection of suspicious queries, attempts to extract data or anomalous behavior towards AI services, blocking them before they compromise sensitive information.

Security by design and resilience as a competitive advantage

For AI to be a real business lever and not a constant source of scares, security has to integrate from day oneIt's not enough to just build the model, put it into production, and then patch it up in a hurry.

A mature strategy involves validate and protect the data In all phases, apply strict access controls, separate development, testing, and production environments, and cryptographically sign model artifacts to ensure their integrity throughout the lifecycle.

It is also key to design capabilities of automated detection and responseWhen a model behaves strangely, when an API receives an anomalous request pattern, or when an unexpected change is detected in a dataset, the system must be able to react quickly, isolate components, and notify the appropriate teams.

Resilience, understood as the capacity of AI to withstand attacks and recover without losing functionalityThis is becoming an essential trust factor for managers. If an organization knows its models are secure, observable, and compliant, it will have much more freedom to innovate and experiment with advanced use cases.

In practice, many companies combine specialized cybersecurity services with application protection and traffic management solutions that allow the application of defense-in-depth strategies: advanced traffic inspection, environment isolation, data exposure mitigation, model monitoring, and intelligent request routing based on cost, compliance, and performance.

All of this doesn't eliminate the need for human oversight, but it does drastically reduce manual and repetitive tasks. AI handles alert triage, event correlation, and information summarization, while specialists focus on understanding attackers' intent, investigating complex incidents, and designing more robust cyber defenses.

Ultimately, the use of AI in security requires assuming three basic ideas: that AI and security must move forward together.Protecting AI involves safeguarding data, models, and interfaces (not just infrastructure), and the resilience generated by a well-protected AI translates into a real competitive advantage over those who improvise as they go.

Artificial intelligence has moved beyond being a fringe experiment to become the driving force behind digital innovation in virtually every sector. Integrating it into security—while simultaneously ensuring adequate protection—allows for mitigating the impact of breaches, anticipating threats, improving crime prevention, and freeing human teams from much of the heavy lifting, provided a careful balance is maintained between effectiveness, ethics, and respect for human rights.

Table of Contents

- The new threat landscape and why AI is key

- How cybercriminals use AI

- AI applications in cybersecurity: from endpoint to cloud

- Benefits of AI for security teams

- Authentication, passwords, and behavioral analysis

- Crime prevention and prediction in the physical world

- Risks and challenges: data security, model security, and API security

- Complexity of hybrid environments and the need for total visibility

- Security by design and resilience as a competitive advantage